The Responsible Use of Generative AI According to the UNESCO International Institute for Higher Education

The responsible use of generative AI requires a basic understanding of its opportunities, limits and requirements. Many AI tools seem intuitive, but they are not neutral. Using them demands a high degree of responsibility, especially in an academic environment and particularly with regard to data protection, transparency and scientific integrity. The following section summarises key principles that apply regardless of the specific area of application.

Before AI is used in studies, teaching or work contexts, a fundamental question arises:

When is the use of generative AI sensible and responsible?

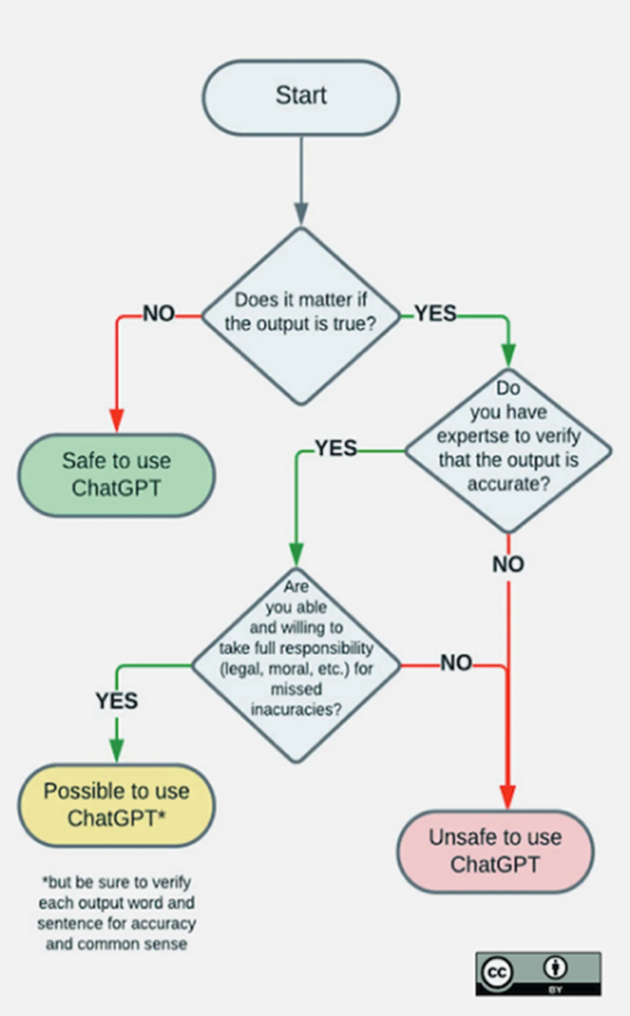

The decision matrix of the UNESCO International Institute for Higher Education provides an initial orientation (see Figure 1). It uses ChatGPT as its example but can be applied to other generative AI systems as well, regardless of whether they generate text, images, code or speech. The central considerations for the use of generative AI are:

- Is it important that the content is correct?

- Is it possible for me to check the accuracy of the content?

- Are there any legal or ethical reasons (particularly with regard to data protection and copyright) that argue against the use of AI in the specific use case?

- Do I have the necessary technical expertise to assess the accuracy of the AI results?

- Am I prepared to take responsibility for any errors?

Depending on the context, the answers lead to one of three utilisation areas, UA1 to UA3:

Utilisation area 1

In UA1, generative AI can be used "uncritically", for example to support creative work and generate ideas; in other words, wherever initial drafts are created and then developed further manually. Because the drafts are revised by hand, errors have no serious consequences.

These include, for example¹:

- Brainstorming and idea development for projects or presentations,

- Support in developing new concepts or strengthening existing ideas,

- Creation of templates for quiz questions/teaching materials that are further processed,

- Initial translations of teaching texts or communication materials into different languages; correction of spelling and grammar.

¹ Although the use of AI is generally considered unproblematic in this context, it may nevertheless be excluded depending on the specific circumstances. For example, an examiner may not permit initial translations, or conference organisers may not permit AI-assisted brainstorming for a scientific publication. Each individual case should therefore be examined to determine whether overarching regulations or guidelines restrict or prohibit the use of AI.

Utilisation area 2

In UA2, use is "possible subject to reservation": users must be able to check the results and take responsibility for them on the basis of their expertise. To take responsibility for the results, users must in particular rule out that the results violate personal rights, data privacy law, licence and copyright law or criminal law.

Note that merely providing data (e.g. by uploading it to an AI system) to servers that are not adequately protected under data protection or copyright law can itself violate applicable legal requirements (see compliance with data protection and copyright law).

Typical examples of use are:

- Summarising articles for an initial overview of content or structure,

- Analysing texts or data, e.g. to identify patterns or correlations,

- Suggestions for formulations,

- Feedback on style and structure for better readability,

- Individualisation of materials for specific learning needs,

- Feedback on or simplification of programming and statistical analysis code.

Utilisation area 3

In UA3, the use of generative AI is "not suitable". This covers areas in which the results are not independently checked for accuracy and appropriateness, or in which a violation of legal (data protection or copyright) or ethical requirements cannot be ruled out. Since generative AI has no normative judgement, it cannot independently take moral, legal or institutional requirements into account. In addition, because currently available AI solutions are black boxes, the derivation of their results lacks transparency and traceability.

This can lead to biased or discriminatory results that remain unrecognised and are incorporated into decisions, e.g. by reproducing existing prejudices from the training data or by disadvantaging certain groups. In this case, users can neither check the accuracy and appropriateness of the results nor take responsibility for them.

Typical applications in which the use of generative AI must therefore be avoided include:

- Automated AI-based assessments of examination results without human review (e.g. automatic grading of seminar papers or theses),

- Processing of personal, confidential or copyright-protected data (e.g. use of generative AI to analyse non-released, copyright-protected teaching materials),

- Automated decisions in selection processes (e.g. applications, scholarships).
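
As a rough illustration, the five central considerations and the three utilisation areas described above can be written as a small decision function. This is only a sketch and not part of the UNESCO matrix itself: the names `UseCase`, `UtilisationArea` and `classify` are hypothetical, and the order of the checks is an assumption. In particular, the sketch assumes that a legal or ethical objection always leads to UA3, even where correctness is not important.

```python
from dataclasses import dataclass
from enum import Enum


class UtilisationArea(Enum):
    UA1 = "can be used uncritically"
    UA2 = "possible subject to reservation"
    UA3 = "not suitable"


@dataclass(frozen=True)
class UseCase:
    # Hypothetical field names; each mirrors one of the five questions.
    correctness_matters: bool
    can_check_accuracy: bool
    legal_or_ethical_objection: bool
    has_expertise: bool
    accepts_responsibility: bool


def classify(case: UseCase) -> UtilisationArea:
    # Assumption: legal or ethical objections rule out use in every case.
    if case.legal_or_ethical_objection:
        return UtilisationArea.UA3
    # Errors have no serious consequences, e.g. drafts revised by hand.
    if not case.correctness_matters:
        return UtilisationArea.UA1
    # Results can be checked by someone with the expertise to do so,
    # and that person is prepared to answer for any errors.
    if (case.can_check_accuracy
            and case.has_expertise
            and case.accepts_responsibility):
        return UtilisationArea.UA2
    # Otherwise the results would be used unchecked.
    return UtilisationArea.UA3


# Brainstorming ideas for a presentation.
brainstorming = UseCase(False, True, False, True, True)
# Summarising an article the user can read and verify.
summary = UseCase(True, True, False, True, True)
# Automatic grading of theses without human review.
grading = UseCase(True, False, False, True, False)

for case in (brainstorming, summary, grading):
    print(classify(case).name)
```

Even where the sketch returns UA1, overarching regulations or guidelines may still restrict or prohibit the use of AI in the individual case.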